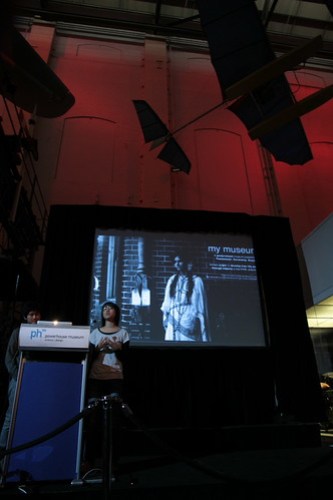

A little while back, at the beginning of June, we hosted the Sydney AR Dev Camp. Organised by Rob Manson and Alex Young, the AR Dev Camp was aimed at exposing local Sydney developers to some of the recent developments in augmented reality. A free event sponsored by Layar and the Powerhouse, it filled the Thinkspace Lab on a Saturday with people who came to network and ‘make stuff’. Rob and Alex also launched their new buildAR toolkit, which lets content producers quickly make and publish mobile AR projects using an online interface.

(AR Dev Camp Sydney by Halans)

AR Dev Camp generated many discussions.

Some of these are covered and expanded on by Suse Cairns and Luke Hesphanol.

The ARTours developed by the Stedelijk Museum and presented as incursions into other spaces – including a rumoured temporary rogue deployment at Tate Modern – really demonstrate the way that AR popularises some interesting conceptual arenas. Indeed, just walking down Harris St that morning, booting up Layar and seeing a giant Lego man hovering over the Powerhouse was something that you’d rarely see. Margriet Schavemaker, Hein Wils, Paul Stork and Ebelien Pondaag’s paper from Museums and the Web 2011 explores these in detail.

—

I spoke to Rob Manson in March, as the event was being planned, about some of the changes in AR.

F&N: A lot has changed in both AR and Layar since we last spoke, way back when MOB released the PHM images in a Layar in 2009. Can you tell me about some of the changes to the Layar platform and other AR apps as you’ve seen them mature?

RM: I can’t believe how quickly that time has passed! But in a lot of ways we haven’t even started and the path in front of us is starting to get a lot clearer now.

Layar has continued with their main strength which is massive adoption (and those figures are just for Android!). It’s now the most dominant platform in the whole AR landscape. And just this week they announced Layar Vision, their natural feature tracking solution. Layar has become the default AR app that everyone refers to.

With this new version 6 of Layar you can now add image-based markers, animation and higher resolution images, and you get a much simpler, improved user experience. And of course it supports a lot more interactivity than it did way back when we created the first Powerhouse layer – it now includes layer actions and proximity triggers. Our buildAR platform makes it easy for you to customise all of these settings and we’ve already announced full support for the new Layar Vision features.

Despite being an early adoption, the Powerhouse layer was loaded 2384 times by 853 unique users in 13 countries in just under 18 months. Whilst that may not sound like a lot, we’ve also had heavily promoted layers run by advertising agencies for major brands that did almost exactly the same numbers as the PHM layer. So on the whole I think the PHM layer has performed pretty well, especially considering it was created quite early on and there aren’t really a lot of reasons for people to return to the layer or share it with their friends.

Now Layar have also released the Layar Player SDK which allows us to embed the Layar browser within our own iPhone applications. This has opened up a world of new opportunities and means we can wrap layers in even richer interactivity and allow users to create and share media like photos, audio and videos. This is what led us to create http://streetARtAPP.com

F&N: Obviously your StreetARt App is indicative of some of these new changes – the ability to separate off as an App in its own right and have interactions.

Yes, we’ve created an App framework around the Layar Player SDK that integrates with our buildAR platform.

The response has been great. We’ve done very little, if any, promotion beyond Twitter, a blog post and being promoted as a featured layer, and in our first month we attracted over 25,000 unique users from 166 countries. Our total count is now well over 200,000 unique users from over 194 countries.

We’ve engaged with street art and graf communities through Twitter and the response has been really good. We’re really outsiders who just enjoy the art and wanted an easier way to find it ourselves. The artists that have used it have given us really positive feedback and seem happy to spread the love.

F&N: What happens to the aggregated dataset of geolocated works?

This is part of our new features road map. The first phase of social sharing with multi-device permalinks has been released. We’re now working on ways for people to import/manage photo sets from Flickr and to be able to map out and share their own sub-sets of the streetARt locations to create walking tours, etc.

Plus we want to focus on specific artists’ works, publish interviews and bubble up more dynamic content to make the whole platform feel more alive.

F&N: How do you see it complementing non-AR graf apps like All City and others?

There are quite a few actually. There’s Allcity, which was sponsored by Adidas; Streetartview.com, which was sponsored by Red Bull; and most recently Bomb It, which is an app based on or supporting a movie. And there’s also the Street Art paid iPhone App.

We think there’s plenty of room for all of these apps, and I’m sure there will be a lot more soon too. However, I think there’s a bit of a backlash building around the sponsored apps as some people in the scene see this as just an exploitation of the graf/streetart community.

We considered this a lot when we built streetARt. In some ways people could point the same finger at us but we don’t charge for the App and we don’t sell sugary drinks or expensive sports clothes/shoes. We just want to find out what happens when you mix cool content with cool technology and so we hope people see our good intentions.

And of course we were the first to do it with AR!

F&N: One thing I’ve been finding challenging with AR, despite all the talk of ‘virtual and physical worlds merging’, is that the public awareness of the data cloud that surrounds everything now is still very low. I’d be interested in your thoughts as to how to make people aware that AR content exists out in the world at large.

I think that’s a critical point. Recently some artists published what they called the ARt Manifesto but David Murphy posted a really valid critique.

There IS an interesting debate to be had around “control” of the digital layers and where they can be overlaid onto the physical world. But the digital layer is an abundant, effectively infinite resource where the cost to create is continually dropping. The really scarce resource that we should all really be focused upon is “attention”.

Getting people’s attention, keeping it and then getting them to engage on an ongoing basis is the real challenge. That’s why we’re so happy with the results that streetARt has created too. Not only have we attracted tens of thousands of users from all around the world, we’ve also been able to attract hundreds of really engaged users that return on a regular basis, many of them almost daily. The key to this was populating streetARt with enough Creative Commons-licensed content to kickstart it. This made sure that most people would see some cool art right from their first experience. In locative media [getting the first experience right] can be a real challenge – so we started with over 30,000 images from over 520 regions around the world, and now the users are helping us grow that further. But the 90/9/1 [participation] ratio is a reality and you have to plan for it.